Talking Dirty to Your Terminal: Voice + AI Without the Chair

Modern speech recognition crossed ninety-five percent accuracy years ago. The technology was ready. What was missing was an AI on the other end that could do something useful with your words. Build a function. Refactor a module. Diagnose a stack trace. That missing piece has arrived, and it changes the fundamental interface between a developer and a machine.

Claude Code ships with a voice command built in for terminal users. For everyone else, Wispr Flow converts speech to text system-wide. Drop your voice into VS Code's Copilot chat, Cursor's AI panel, JetBrains AI Assistant, or anywhere your cursor blinks. ChatGPT runs voice mode on your car dashboard. These are not prototypes or concept demos. They are production tools, shipping today, and together they open a door that the keyboard has kept closed for decades.

Speaking a prompt is usually faster than typing one. But the difference in quality runs deeper than stopwatch measurements. When you talk, you naturally include more context. More detail. More of what you actually mean beneath the surface of the words. You ramble into clarity. You circle back to an earlier point. You add the kind of ambient richness that a typed prompt, constrained by the physical effort of keyboard input, strips away before it ever reaches the model. The AI does not mind the extra words. It does not get bored. It uses everything you give it, and the output improves because the input was fuller.

But speed is not the real story here. The real story is what happens when the keyboard is no longer the only path between your mind and the machine.

Software engineering has always been a sedentary profession. We sit. We hunch. We stare at screens for hours and convince ourselves this is normal because everyone around us is doing the same thing. Voice breaks that pattern. Go for a walk and discuss a system design with an agent. Drive to the store talking through a specification and arrive with a first draft waiting in your inbox. Pace your living room working through a problem out loud, and the simple act of explaining it, of hearing yourself articulate the edges of the problem, often reveals the solution before the AI even responds. Programmers have long called this rubber ducking. The new version has the duck talking back, and it is genuinely useful. You solve problems faster not because the AI is smarter than you, but because speaking forces you to clarify your own thinking.

There is a subtler shift happening alongside the physical one. Typing rewards precision. It favors careful phrasing and deliberate structure, because fixing a badly typed prompt costs keystrokes and breaks your flow. Speaking rewards flow.

When you talk through a problem with an AI agent listening, you spend less time crafting the perfect prompt and more time actually thinking about the problem itself.

The agent stops being a prompt box and starts being a collaborator. You think out loud. It listens. You iterate verbally the way you would with a colleague standing at a whiteboard. You arrive at clarity together, and neither of you was sitting down for any of it.

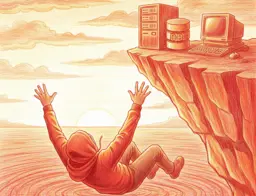

None of this means throwing away your keyboard. Some tasks still call for precision, for silence, for the deliberate act of placing each character where it belongs. But the keyboard is no longer the only interface, and that changes the shape of the day. The walk, the drive, the moment away from the screen: those can all now be part of how you build. Not because you are squeezing more work into every spare minute, but because the way you work has finally caught up with how you actually think. You were never meant to be chained to a desk in the first place.

You can talk to your tools now. You can build software standing up, walking away, looking at something other than a screen. That is not a productivity hack. That is a better way to work.